Meta Tightens Teen Safety on Instagram and Facebook

Meta boosts safety for teens on Instagram and Facebook with stricter controls, parental permissions, and new restrictions for under-16 users.

Meta, the company behind Instagram and Facebook, is rolling out a new wave of safety measures to protect teenagers on its platforms. Building on last year's introduction of Teen Accounts on Instagram, Meta is now expanding these protections to Facebook and Messenger, aiming to create a safer digital space for young users around the world.

Teen Accounts are special profiles that Meta automatically assigns to teenage users, and teens under 16 cannot relax their settings without a parent's permission. These accounts come with built-in safety features that limit who can contact teens and what kind of content they can see. The goal is to shield young users from inappropriate content and unwanted interactions while giving parents more control over their children's online experiences.

Since Teen Accounts launched on Instagram, Meta reports that 97% of users aged 13–15 have kept the stricter default safety settings. That high retention rate has encouraged Meta to extend the same protections to Facebook and Messenger.

New Instagram Restrictions

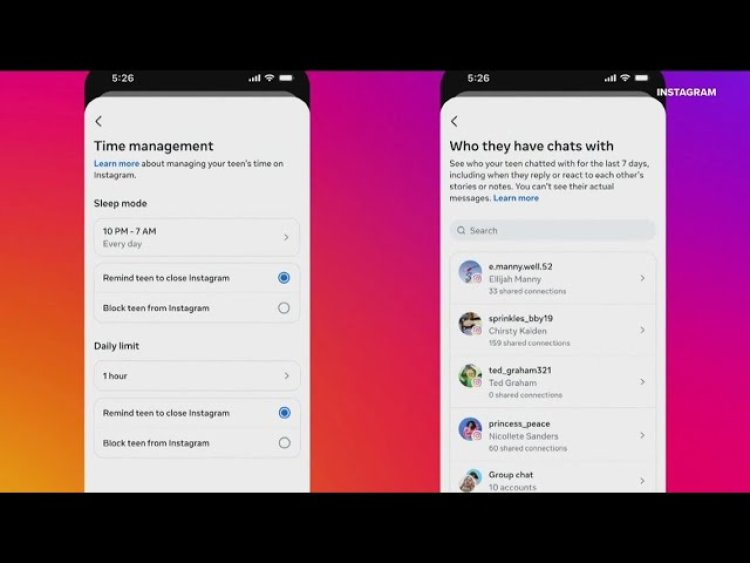

Meta is introducing even tighter controls for Instagram users under 16:

- Instagram Live: Teens will now need explicit parental permission to start a Live broadcast.

- Blurred Images in DMs: By default, Instagram blurs images in direct messages that may contain nudity. Teens will now need parental approval to turn this feature off.

- Rollout Timeline: These new controls are expected to be available in the next couple of months.

Facebook and Messenger: Following Instagram’s Lead

The safety features that have worked on Instagram are now coming to Facebook and Messenger. Teen Accounts on these platforms will:

- Limit exposure to inappropriate or harmful content.

- Restrict unwanted contact, so only people the teen follows or is already connected with can send messages.

- Include tools to help teens manage their screen time, such as reminders to take breaks after 60 minutes and overnight notification silencing.

The rollout will begin in the United States, United Kingdom, Australia, and Canada, with plans to expand to more countries soon.

Parental Controls and Positive Feedback

Meta's approach places a strong emphasis on parental involvement. Any attempt by a teen under 16 to loosen the default safety settings requires explicit parental permission. According to a Meta survey, 94% of parents found these features helpful, and 85% said they make it easier to help their teens have positive experiences online. Most parents surveyed also said the protections make a real difference in keeping their children safe on social media.

Why These Changes Matter

With more than 54 million Teen Accounts active worldwide since the feature launched in September 2024, Meta's expansion responds to growing concerns about young users' safety online. The company is trying to balance the appeal of social media with the need to protect teens from risks such as cyberbullying, inappropriate content, and unwanted contact.

The Bigger Picture

Meta’s latest update is part of an ongoing effort to make social media safer for the next generation. By combining automatic protections, parental controls, and time management tools, Meta hopes to give both teens and parents the confidence to navigate the digital world responsibly.

As these new features roll out, parents and teens alike can expect a more secure and supportive experience on Instagram, Facebook, and Messenger, one that helps everyone focus on the positive side of social media.